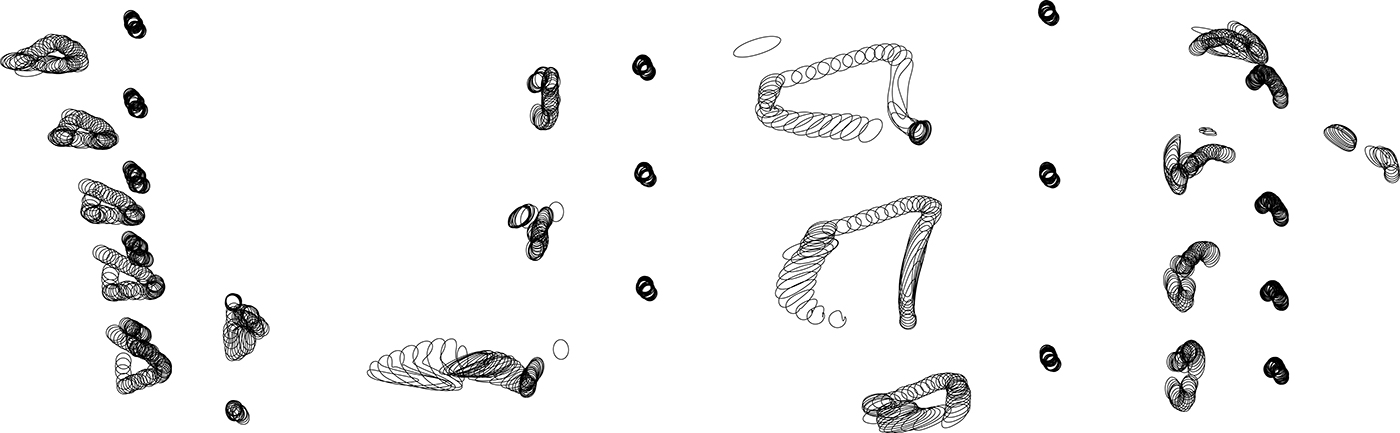

Models of cartwheel installations by Eli Silver

We were inspired by the work of Vladislav Petrovskiy, Geoffrey Mann, and Shinichi Maruyama, who use various mediums to capture bodies in motion. Struck by the lack of tools for generating such sculptures procedurally, we set out to create our own. We wanted to use a familiar form, the human body, to make unfamiliar forms through technology that still feel organic, human, and active, elevating typically mundane activities to artistic value and physically altering a space to evoke intrigue. Our response to "Why Human" explores the collaboration of technology and the human form to speed up the process of artistic creation, using the human body as our paintbrush and the tangible world as our medium.

Our scripts were written in Python using Rhino's Python scripting API, and they processed .csv files of Perception Neuron motion capture data exported from Axis Neuron. Our process translated the motion capture data points into XYZ coordinates, which we could then manipulate in 3-D modeling software.
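As a rough illustration of the CSV-to-XYZ step, the sketch below reads one joint's position from each frame of a motion capture export and returns a list of points. It is not our original script: the column names (`RightHand_X` and so on), the `read_joint_path` helper, and the `scale` parameter are assumptions made for the example, and a real Axis Neuron export may be laid out differently.

```python
import csv
import io


def read_joint_path(csv_file, joint, scale=1.0):
    """Return one (x, y, z) tuple per frame for a single joint.

    Assumes a header row with hypothetical columns named
    '<joint>_X', '<joint>_Y', and '<joint>_Z'.
    """
    reader = csv.DictReader(csv_file)
    path = []
    for row in reader:
        # Convert the text fields to floats and apply a uniform scale,
        # e.g. to convert capture units to modeling units.
        x = float(row[joint + "_X"]) * scale
        y = float(row[joint + "_Y"]) * scale
        z = float(row[joint + "_Z"]) * scale
        path.append((x, y, z))
    return path


# Made-up sample data standing in for an exported capture file.
sample = io.StringIO(
    "Frame,RightHand_X,RightHand_Y,RightHand_Z\n"
    "0,0.5,1.25,0.0\n"
    "1,0.75,1.5,0.25\n"
)

path = read_joint_path(sample, "RightHand", scale=100.0)
for point in path:
    print(point)
```

Inside Rhino, a list of points like this could then be passed to a curve-drawing call such as `rhinoscriptsyntax.AddInterpCurve` to trace the joint's motion, though the exact calls would depend on the form being modeled.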

Cross Sections of cartwheel motion form.

Concept sketches for installation placement and implementation

Example of a cartwheel performed while wearing the Perception Neuron motion capture suit.

The same cartwheel realized digitally in the Axis Neuron software.

Exploring the intersection of art and technology, I helped with the design process overview and the initial installation concept renderings. To create visual representations of human movement, I learned how to operate the Axis Neuron software and Perception Neuron motion capture technology, and I provided input on how the modeled forms could be implemented in a physical space.

Award recipient at the 2017 Hacking Arts @ MIT event, located at the MIT Media Lab.